| 14 | /home/psycrit/site/mw/mediawiki-1.37.1/includes/parser/Parser.php | 2428 | Parser -> | handleInternalLinks2(0=['"`UNIQ--hide-00000000-QINU`"'

'"`UNIQ--hide-00000001-QINU`"'

* '''Title''': Behavior-centric versus reinforcer-centric descriptions of behavior

* '''Author(s)''':

* '''Date''': 16 July 2006

* '''Keyname''': 2006-07-16-Davison

* '''Responds to''':

* '''Lead-in''': The paper is a brilliant tour-de-force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to effect this response in the presence of the remembered stimulus...

no responses found

'"`UNIQ--h-0--QINU`"'Full Text

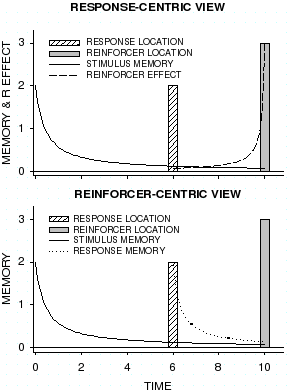

In a recent paper, Rachlin (2006) described how a discounting view pervades delay of reinforcement, memory, and choice. The paper is a brilliant tour-de-force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to effect this response in the presence of the remembered stimulus (see Figure 1, upper graph). As a conceptualization of understanding behavior I have no quibbles with this approach. But as a model of how an animal ''adapts'' to, or ''learns'' about, situations with stimulus-behavior delays and response-reinforcer delays, the model has the problem of reinforcer effects spreading backward in time. Physiologically, the process cannot act in this way, and physiology must require that the memory of an event flows forward in time, rather than the reinforcer effect flowing backwards. But the response-centric view is the dominant view in the study of delayed reinforcers (Mazur, 1987) and of self control (Green & Myerson, 2004).

A simpler, much more likely, and physiologically-consistent conceptualization of the adaptation to these delays is shown in Figure 1 (lower graph). This is the reinforcer-centric view. In this view, at the point at which a reinforcer is delivered, it is the conjunction of the memories of both the stimulus and the response at the time of reinforcer delivery that is “strengthened” and, I presume, remembered and subsequently accessed and used. This approach suggests a different, and more parsimonious, mechanism for learning and activity that is squarely based on memory. When reinforcers are delayed, it is the residual memory of responses times the value of the reinforcers that will describe the effects of reinforcer delay on behavior. When responses are delayed following stimuli, it is the residual memory of the stimulus times the value of the reinforcer that will describe the stimulus-reinforcer conjunction, providing a role for stimulus-reinforcer relations (as in momentum theory). It seems reasonable to assume that stimulus-behavior-reinforcer correlations are learned at the point of reinforcer delivery, and further, that, given the stimuli, behaviors and reinforcers are discriminable from other learned correlations, the presentation or emission of any of these engenders the learned correlation. Thus, behavior can be reinstated by presenting the reinforcer or the stimulus to the animal—and maybe by leading an animal to emit the response.

The reinforcer-centric view highlights important aspects of behavior that have been largely forgotten –for instance, while we generally accept that the discriminability of a stimulus from other stimuli will affect memory for that stimulus, the discriminability of a response from other responses will also have an effect (for a treatment of this, see Davison & Nevin, 1999; and Nevin, Davison & Shahan, 2005). Equally, the discriminability of this reinforcer from others will be critical, a possibility entertained by Davison and Nevin as the basis of the differential-outcomes effect. The mechanistic aspect of this conception leads, after training, to a correlation between events extended in time that describes steady-state behavior—but the molecular, mechanistic, aspect remains (and must do so) so that animals can learn to change their behavior in changed circumstances (see, for example, Krägeloh & Davison, 2003)—that is, the extended correlations must be able to change. The extended correlations predict steady-state behavior, the molecular aspects describes changes between steady-states. The molecular aspect is not absent in the steady-state, rather its significance is attenuated by learned correlations.

]) |

| 15 | /home/psycrit/site/mw/mediawiki-1.37.1/includes/parser/Parser.php | 1627 | Parser -> | handleInternalLinks(0=['"`UNIQ--hide-00000000-QINU`"'

'"`UNIQ--hide-00000001-QINU`"'

* '''Title''': Behavior-centric versus reinforcer-centric descriptions of behavior

* '''Author(s)''':

* '''Date''': 16 July 2006

* '''Keyname''': 2006-07-16-Davison

* '''Responds to''':

* '''Lead-in''': The paper is a brilliant tour-de-force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to effect this response in the presence of the remembered stimulus...

no responses found

'"`UNIQ--h-0--QINU`"'Full Text

In a recent paper, Rachlin (2006) described how a discounting view pervades delay of reinforcement, memory, and choice. The paper is a brilliant tour-de-force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to effect this response in the presence of the remembered stimulus (see Figure 1, upper graph). As a conceptualization of understanding behavior I have no quibbles with this approach. But as a model of how an animal ''adapts'' to, or ''learns'' about, situations with stimulus-behavior delays and response-reinforcer delays, the model has the problem of reinforcer effects spreading backward in time. Physiologically, the process cannot act in this way, and physiology must require that the memory of an event flows forward in time, rather than the reinforcer effect flowing backwards. But the response-centric view is the dominant view in the study of delayed reinforcers (Mazur, 1987) and of self control (Green & Myerson, 2004).

A simpler, much more likely, and physiologically-consistent conceptualization of the adaptation to these delays is shown in Figure 1 (lower graph). This is the reinforcer-centric view. In this view, at the point at which a reinforcer is delivered, it is the conjunction of the memories of both the stimulus and the response at the time of reinforcer delivery that is “strengthened” and, I presume, remembered and subsequently accessed and used. This approach suggests a different, and more parsimonious, mechanism for learning and activity that is squarely based on memory. When reinforcers are delayed, it is the residual memory of responses times the value of the reinforcers that will describe the effects of reinforcer delay on behavior. When responses are delayed following stimuli, it is the residual memory of the stimulus times the value of the reinforcer that will describe the stimulus-reinforcer conjunction, providing a role for stimulus-reinforcer relations (as in momentum theory). It seems reasonable to assume that stimulus-behavior-reinforcer correlations are learned at the point of reinforcer delivery, and further, that, given the stimuli, behaviors and reinforcers are discriminable from other learned correlations, the presentation or emission of any of these engenders the learned correlation. Thus, behavior can be reinstated by presenting the reinforcer or the stimulus to the animal—and maybe by leading an animal to emit the response.

The reinforcer-centric view highlights important aspects of behavior that have been largely forgotten –for instance, while we generally accept that the discriminability of a stimulus from other stimuli will affect memory for that stimulus, the discriminability of a response from other responses will also have an effect (for a treatment of this, see Davison & Nevin, 1999; and Nevin, Davison & Shahan, 2005). Equally, the discriminability of this reinforcer from others will be critical, a possibility entertained by Davison and Nevin as the basis of the differential-outcomes effect. The mechanistic aspect of this conception leads, after training, to a correlation between events extended in time that describes steady-state behavior—but the molecular, mechanistic, aspect remains (and must do so) so that animals can learn to change their behavior in changed circumstances (see, for example, Krägeloh & Davison, 2003)—that is, the extended correlations must be able to change. The extended correlations predict steady-state behavior, the molecular aspects describes changes between steady-states. The molecular aspect is not absent in the steady-state, rather its significance is attenuated by learned correlations.

]) |

[[responds to::2006-Rachlin]]

[[keyname::2006-07-16-Davison]]

[[Title::Behavior-centric versus reinforcer-centric descriptions of behavior]]

[[author::Michael Davison]]

[[author/affil::The University of Auckland, New Zealand]]

[[cite/author::Davison 2006]]

[[Date::2006-07-16]]

[[author/ref::Davison]]

[[listing section::behavioral economics]]

[[lead-in::The paper is a brilliant tour de force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to affect this response in the presence of the remembered stimulus...]]

{{page/spec/response}}

==Full Text==

In a recent paper, Rachlin (2006) described how a discounting view pervades the study of delay of reinforcement, memory, and choice. The paper is a brilliant tour de force, but a subtext to the paper is what I will call the behavior-centric view. In this view, stimuli are remembered until a response is emitted, and reinforcers reach back in time to affect this response in the presence of the remembered stimulus (see Figure 1, upper graph). As a conceptualization for understanding behavior, I have no quibbles with this approach. But as a model of how an animal ''adapts'' to, or ''learns'' about, situations with stimulus-behavior delays and response-reinforcer delays, it has the problem of reinforcer effects spreading backward in time. Physiologically, the process cannot act in this way: physiology must require that the memory of an event flows forward in time, rather than the reinforcer effect flowing backward. Yet this behavior-centric (response-centric) view is the dominant view in the study of delayed reinforcers (Mazur, 1987) and of self-control (Green & Myerson, 2004).

A simpler, much more likely, and physiologically consistent conceptualization of the adaptation to these delays is shown in Figure 1 (lower graph). This is the reinforcer-centric view. In this view, at the point at which a reinforcer is delivered, it is the conjunction of the memories of the stimulus and of the response, as they exist at that moment, that is “strengthened” and, I presume, remembered and subsequently accessed and used. This approach suggests a different, and more parsimonious, mechanism for learning and activity, one that is squarely based on memory. When reinforcers are delayed, the effects of reinforcer delay on behavior will be described by the residual memory of responses multiplied by the value of the reinforcers. When responses are delayed following stimuli, the stimulus-reinforcer conjunction will be described by the residual memory of the stimulus multiplied by the value of the reinforcer, which provides a role for stimulus-reinforcer relations (as in momentum theory). It seems reasonable to assume that stimulus-behavior-reinforcer correlations are learned at the point of reinforcer delivery and, further, that, provided the stimuli, behaviors, and reinforcers are discriminable from those in other learned correlations, the presentation or emission of any one of them engenders the learned correlation. Thus, behavior can be reinstated by presenting the reinforcer or the stimulus to the animal, and perhaps by leading an animal to emit the response.
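The multiplicative rule in the paragraph above can be sketched in code. This is a minimal illustration under stated assumptions, not the author's model. The text does not say how memory decays, so the hyperbolic trace `residual_memory` is a hypothetical choice. Its rate `k = 0.2` per second, the use of seconds, and the names `Trial` and `credit_at_reinforcement` are likewise illustrative. What the sketch is meant to show is the direction of computation: all credit is assigned at the moment of reinforcer delivery, from memory traces that have flowed forward in time.

```python
from dataclasses import dataclass


def residual_memory(age: float, k: float = 0.2) -> float:
    """Hypothetical memory trace that decays hyperbolically with the time
    elapsed since the event. The decay function and the rate k are
    assumptions for illustration; the text does not specify either."""
    if age < 0:
        raise ValueError("an event cannot be remembered before it occurs")
    return 1.0 / (1.0 + k * age)


@dataclass
class Trial:
    stimulus_time: float     # when the stimulus was presented (s, assumed unit)
    response_time: float     # when the response was emitted (s)
    reinforcer_time: float   # when the reinforcer was delivered (s)
    reinforcer_value: float  # value of the reinforcer (arbitrary units)


def credit_at_reinforcement(trial: Trial, k: float = 0.2) -> dict:
    """Strength credited at the moment of reinforcer delivery.

    Only memory traces that exist at delivery time are used; nothing
    reaches back in time. Each credit is residual memory x reinforcer value.
    """
    now = trial.reinforcer_time
    response_memory = residual_memory(now - trial.response_time, k)
    stimulus_memory = residual_memory(now - trial.stimulus_time, k)
    return {
        "response": response_memory * trial.reinforcer_value,
        "stimulus": stimulus_memory * trial.reinforcer_value,
    }


# Stimulus at 0 s, response at 2 s, reinforcer worth 10 units at 7 s.
credits = credit_at_reinforcement(Trial(0.0, 2.0, 7.0, 10.0))
print(credits)
```

With these assumed values, the response is remembered across a 5 s delay and is credited with half the reinforcer's value (5 units). The stimulus lies 7 s back and receives less (about 4.17 units). Any other monotonically decreasing decay function could be substituted without changing the structure of the calculation.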

The reinforcer-centric view highlights important aspects of behavior that have been largely forgotten. For instance, while we generally accept that the discriminability of a stimulus from other stimuli will affect memory for that stimulus, the discriminability of a response from other responses will also have an effect (for a treatment of this, see Davison & Nevin, 1999; and Nevin, Davison & Shahan, 2005). Equally, the discriminability of this reinforcer from others will be critical, a possibility entertained by Davison and Nevin as the basis of the differential-outcomes effect. After training, the mechanistic aspect of this conception leads to a correlation between events extended in time that describes steady-state behavior. But the molecular, mechanistic aspect remains (and must do so) so that animals can learn to change their behavior when circumstances change (see, for example, Krägeloh & Davison, 2003); that is, the extended correlations must be able to change. The extended correlations predict steady-state behavior; the molecular aspects describe changes between steady states. The molecular aspect is not absent in the steady state; rather, its significance is attenuated by learned correlations.

[[Image:DavisonRachlin2006 fig 1.png|left|frame|413px|'''Figure 1'''. Two views of behavior. The upper graph shows the response-centric view, in which reinforcer effects spread backwards in time. The lower graph shows the reinforcer-centric view, in which the memories of both stimuli and responses are correlated with the reinforcer value at the time of reinforcer delivery. R Effect is Reinforcer Effect.]]The situation is, of course, much more dynamic than depicted in Figure 1. Over time, many different responses are emitted in many different stimulus conditions, only some of which come to have a positive correlation with reinforcer (or punisher) delivery as learning develops. On this view, more highly discriminable responses and stimuli will initially develop greater positive correlations.

Interestingly, the reinforcer-centric view changes nothing about the quantitative analysis discussed by Rachlin (2006). In particular, “self-control” and preference reversal over time still occur.
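One way to see why the quantitative analysis is unchanged is to assume that residual memory decays hyperbolically. Under that assumption, residual memory times reinforcer amount at delivery is numerically identical to Mazur's (1987) hyperbolic discounted value, V = A/(1 + kD). The sketch assumes exactly that. The amounts, delivery times, k = 1, and the helper names `hyperbolic_value` and `preferred` are hypothetical, chosen only to produce a reversal.

```python
def hyperbolic_value(amount: float, delay: float, k: float = 1.0) -> float:
    """Hyperbolic form V = A / (1 + kD) (Mazur, 1987).

    Behavior-centric reading: the reinforcer's value discounted back to the
    moment of choice. Reinforcer-centric reading (assumed here): the amount
    times a residual memory of the choice response, 1 / (1 + kD), evaluated
    when the reinforcer arrives. Both readings yield the same number.
    """
    return amount / (1.0 + k * delay)


def preferred(now: float, smaller_sooner: tuple, larger_later: tuple,
              k: float = 1.0) -> str:
    """Which option has the higher value when choosing at time `now`?

    Each option is (amount, delivery_time). Ties go to the larger-later one.
    """
    small_amount, small_time = smaller_sooner
    large_amount, large_time = larger_later
    v_small = hyperbolic_value(small_amount, small_time - now, k)
    v_large = hyperbolic_value(large_amount, large_time - now, k)
    return "smaller-sooner" if v_small > v_large else "larger-later"


# Hypothetical options: 2 units at t = 10 versus 4 units at t = 14.
early = preferred(0.0, (2.0, 10.0), (4.0, 14.0))  # choosing well in advance
late = preferred(9.0, (2.0, 10.0), (4.0, 14.0))   # choosing just before t = 10
print(early, late)
```

When the choice is made at time 0, the larger-later option has the higher value (about 0.27 versus 0.18). When it is made at time 9, one time unit before the smaller reward, the smaller-sooner option wins (1.0 versus about 0.67). That is the preference reversal referred to in the text, and the numbers are the same whichever reading of 1/(1 + kD) is adopted.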

There has been considerable debate recently as to whether the behavior-environment relation is better understood as a molecular mechanistic process or as a process extended over time (Dinsmoor, 2001, and commentaries; Baum, 2002). The present approach does not argue that the “termination of stimuli positive correlated with shock and the production of stimuli negatively correlated with shock [Dinsmoor, p. 311]” are positively reinforcing. It does, however, make a suggestion that is similar at face value: that memories for stimuli and responses are available at reinforcement or punishment. The present approach bridges temporal distance by memory. The degraded memory is temporally contiguous with the reinforcer or punisher, and subsequent performance is affected by the resulting size of the relative, and necessarily temporally extended, correlation between these degraded memories. This, I suggest, is what occurs during learning and adaptation to a new environment. But because the correlations are based on relations extended over time, once adaptation has substantially occurred it is these molar correlations that mostly (but not entirely) control behavior. Which approach, molecular or extended, is best depends very much on whether we are interested in describing behavior during learning and adaptation or in describing steady-state behavior. In this I find myself in very substantial agreement with Hineline (2001), who argued that molecular and extended (molar) analyses are not mutually exclusive.
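The interplay between molecular updates and extended correlations can be illustrated with a simple incremental learning rule. The linear-operator update used here, its step size `alpha`, and the function name `learn` are assumptions for illustration only; the text does not commit to any particular rule. Each reinforcer nudges the stored correlation toward the credit delivered on that occasion. In a stable environment the stored value therefore settles and behaves like a molar quantity, while the same per-reinforcer step lets it move again when conditions change.

```python
def learn(credits, alpha: float = 0.1, start: float = 0.0) -> list:
    """Hypothetical linear-operator rule (an assumption, not the author's model).

    After each reinforcer, the stored correlation moves a fraction `alpha`
    of the way toward the credit delivered on that occasion (the molecular,
    per-reinforcer step). Over many reinforcers the stored value tracks an
    exponentially weighted average of recent credits (the extended, molar
    correlation). Returns the stored value after every reinforcer.
    """
    strength = start
    history = []
    for credit in credits:
        strength += alpha * (credit - strength)
        history.append(strength)
    return history


# Hypothetical schedule: 100 reinforcers crediting 5 units, then 100 crediting 1.
trajectory = learn([5.0] * 100 + [1.0] * 100)
print(round(trajectory[99], 3), round(trajectory[199], 3))
```

After 100 reinforcers crediting 5 units, the stored value is within 0.001 of 5. After a further 100 reinforcers crediting 1 unit, it has moved to within 0.001 of 1. A larger `alpha` would make transitions faster, but it would also make the steady state noisier when credits vary from one reinforcer to the next.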

Perhaps we should stop telling our students that “reinforcers increase the probability of responses that they follow” and tell them instead that “response probability is increased when responses are followed by reinforcers”.

Michael Davison

The University of Auckland, New Zealand

===References===

* Baum, W. M. (2002). From molecular to molar: A paradigm shift in behavior analysis. ''Journal of the Experimental Analysis of Behavior, 78'', 95-116.

* Davison, M., & Nevin, J. A. (1999). Stimuli, reinforcers, and behavior: An integration. Monograph. ''Journal of the Experimental Analysis of Behavior, 71'', 439-482.

* Dinsmoor, J. A. (2001). Stimuli inevitably generated by behavior that avoids electric shock are inherently reinforcing. ''Journal of the Experimental Analysis of Behavior, 75'', 311-333.

* Green, L., & Myerson, J. (2004). A discounting framework for choice with delayed and probabilistic rewards. ''Psychological Bulletin, 130'', 769-792.

* Hineline, P. N. (2001). Beyond the molar-molecular distinction: We need multiscaled analyses. ''Journal of the Experimental Analysis of Behavior, 75'', 342-347.

* Krägeloh, C. U., & Davison, M. (2003). Concurrent-schedule performance in transition: Changeover delays and signaled reinforcer ratios. ''Journal of the Experimental Analysis of Behavior, 79'', 87-109.

* Mazur, J. E. (1987). An adjusting procedure for studying delayed reinforcement. In M. L. Commons, J. E. Mazur, J. A. Nevin, & H. Rachlin (Eds.), ''Quantitative analyses of behaviour, Vol. 5: The effects of delay and of intervening events on reinforcement value'' (pp. 55-73). Mahwah, NJ: Erlbaum.

* Nevin, J. A., Davison, M., & Shahan, T. A. (2005). A theory of attending and reinforcement in conditional discriminations. ''Journal of the Experimental Analysis of Behavior, 84'', 281-303.

* Rachlin, H. (2006). [[Notes on discounting]]. ''Journal of the Experimental Analysis of Behavior, 85'', 425-435.

===Acknowledgements===

Thanks to William M. Baum, Douglas Elliffe, and J. Anthony Nevin for many productive conversations on these topics. The views expressed, though, remain my own and probably will continue so to be.